What are the uses of recurrent neural networks when using them with Reinforcement Learning?

I know that feedforward multi-layer neural networks trained with backprop are used in Reinforcement Learning to help generalize from the actions our agent takes. That is, if we have a big state space, we can try some actions, and the network will help generalize over the whole state space.

What do recurrent neural networks do instead? What tasks are they used for, in general?

Answer

Recurrent Neural Networks, RNN for short (although beware that RNN is often used in the literature to designate Random Neural Networks, which effectively are a special case of Recurrent NN), come in very different "flavors", which causes them to exhibit various behaviors and characteristics. In general, however, these many shades of behaviors and characteristics are rooted in the availability of [feedback] input to individual neurons. Such feedback comes from other parts of the network, be it local or distant, from the same layer (including in some cases "self"), or even from different layers (*). Feedback information is treated as "normal" input to the neuron and can then influence, at least in part, its output.

Unlike back propagation, which is used during the learning phase of a feed-forward network for the purpose of fine-tuning the relative weights of the various [feedforward-only] connections, feedback in RNNs constitutes a true input to the neurons it connects to.
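To make the distinction concrete, here is a minimal sketch of a single update of a two-neuron recurrent layer. The function name `recurrent_step`, the weight values and the `tanh` activation are my own illustrative assumptions, not something taken from a particular RNN architecture. It only shows that the layer's previous outputs enter the sum exactly like external inputs do.

```python
import math

def recurrent_step(x, h_prev, w_in, w_fb):
    """One update of a tiny recurrent layer (illustrative sketch).

    x      : list of external inputs to the layer
    h_prev : list of the layer's own previous outputs (the feedback)
    w_in   : w_in[i][j] weights external input j into neuron i
    w_fb   : w_fb[i][k] weights feedback from neuron k into neuron i
    """
    h_new = []
    for i in range(len(h_prev)):
        # Contribution of the "normal" external inputs.
        total = sum(w_in[i][j] * x[j] for j in range(len(x)))
        # Feedback is simply added in as more input.
        total += sum(w_fb[i][k] * h_prev[k] for k in range(len(h_prev)))
        h_new.append(math.tanh(total))
    return h_new

# Hypothetical weights: each neuron listens to the other one.
W_IN = [[0.5], [-0.3]]
W_FB = [[0.0, 0.8], [0.8, 0.0]]

same_input = [1.0]
out_from_rest = recurrent_step(same_input, [0.0, 0.0], W_IN, W_FB)
out_from_active = recurrent_step(same_input, [0.9, 0.9], W_IN, W_FB)
print(out_from_rest, out_from_active)
```

With the same external input, the second neuron stays negative when the layer starts at rest but turns positive when its neighbor was already active, which is the "prompted to fire by feedback" effect in miniature.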

One of the uses of feedback is to make the network more resilient to noise and other imperfections in the input (i.e. the input to the network as a whole). The reason for this is that in addition to inputs "directly" pertaining to the network input (the types of input that would have been present in a feed-forward network), neurons have information about what other neurons are "thinking". This extra info then leads to Hebbian learning, i.e. the idea that neurons that [usually] fire together should "encourage" each other to fire. In practical terms, this extra input from "like-firing" neighbor neurons (or not-so-near neighbors) may prompt a neuron to fire even though its non-feedback inputs alone would not have made it fire (or would have made it fire less strongly, depending on the type of network).

An example of this resilience to input imperfections is associative memory, a common use of RNNs. The idea is to use the feedback info to "fill in the blanks".
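As a rough illustration of "filling in the blanks", here is a small Hopfield-style associative memory in plain Python. The helper names (`train_hopfield`, `recall`), the two 8-element patterns and the number of sweeps are assumptions chosen for the example. The weights use the basic Hebbian rule, where units that are active together get a positive connection.

```python
def train_hopfield(patterns):
    """Build a symmetric weight matrix with the Hebbian rule (no self-connections)."""
    n = len(patterns[0])
    w = [[0.0] * n for _ in range(n)]
    for p in patterns:
        for i in range(n):
            for j in range(n):
                if i != j:
                    w[i][j] += p[i] * p[j] / n
    return w

def recall(w, state, sweeps=5):
    """Update the units one at a time, each driven only by feedback from the others."""
    state = list(state)
    for _ in range(sweeps):
        for i in range(len(state)):
            field = sum(w[i][j] * state[j] for j in range(len(state)))
            state[i] = 1 if field >= 0 else -1
    return state

# Two hypothetical +1/-1 patterns to memorize.
pattern_a = [1, -1, 1, -1, 1, -1, 1, -1]
pattern_b = [1, 1, 1, 1, -1, -1, -1, -1]
weights = train_hopfield([pattern_a, pattern_b])

# Corrupt two entries of pattern_a, then let the feedback repair them.
noisy = list(pattern_a)
noisy[0] = -noisy[0]
noisy[3] = -noisy[3]
restored = recall(weights, noisy)
print(restored == pattern_a)  # True
```

The corrupted units are flipped back because the feedback from the units that are still correct outweighs the noise.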

Another related but distinct use of feedback is with inhibitory signals, whereby a given neuron may learn that while all its other inputs would prompt it to fire, a particular feedback input from some other part of the network typically indicates that the other inputs are somehow not to be trusted (in this particular context).

Another extremely important use of feedback is that in some architectures it can introduce a temporal element to the system. A particular [feedback] input may not so much tell the neuron what another part of the network "thinks" [now], but instead "remind" the neuron that, say, two cycles ago (whatever cycles may represent), the network's state (or one of its sub-states) was "X". Such an ability to "remember" the [typically] recent past is another factor of resilience to noise in the input, but its main interest may be in introducing "prediction" into the learning process. These time-delayed inputs may be seen as predictions from other parts of the network: "I've heard footsteps in the hallway, expect to hear the door bell [or keys shuffling]".
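The "two cycles ago" idea can be sketched with a deliberately simple linear recurrence that behaves like a delay line. Everything here (the function names `step` and `run`, the 3-unit state, the shift-style feedback weights) is a hypothetical illustration rather than a trained network. Unit `k` ends up holding the input the network saw `k` cycles earlier.

```python
def step(state, x):
    """One cycle of a shift-register style recurrence.

    After the update, state[k] holds the external input from k cycles ago.
    """
    w_in = [1.0, 0.0, 0.0]            # only unit 0 sees the external input
    w_fb = [[0.0, 0.0, 0.0],          # unit 0 receives no feedback
            [1.0, 0.0, 0.0],          # unit 1 copies the old value of unit 0
            [0.0, 1.0, 0.0]]          # unit 2 copies the old value of unit 1
    return [w_in[i] * x + sum(w_fb[i][k] * state[k] for k in range(3))
            for i in range(3)]

def run(inputs):
    """Feed a sequence and report, at each cycle, what was heard two cycles ago."""
    state = [0.0, 0.0, 0.0]
    reminders = []
    for x in inputs:
        state = step(state, x)
        reminders.append(state[2])
    return reminders

footsteps = [1.0, 0.0, 0.0, 0.0, 0.0]   # footsteps heard only at cycle 0
expect_doorbell = run(footsteps)
print(expect_doorbell)  # [0.0, 0.0, 1.0, 0.0, 0.0]
```

At cycle 2 the current input is silent, yet the feedback path still carries the footsteps from cycle 0. A purely feed-forward network that sees only the current input has no such signal to base an expectation on.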

(*) BTW, such broad freedom in the "rules" that dictate the allowed connections, whether feedback or feed-forward, explains why there are so many different RNN architectures and variations thereof. Another reason for these many different architectures is that RNNs are not as readily tractable, mathematically or otherwise, as the feed-forward model. As a result, driven by mathematical insight or a plain trial-and-error approach, many different possibilities are being tried.

This is not to say that feedback networks are total black boxes; in fact, some RNNs such as Hopfield Networks are rather well understood. It's just that the math is typically more complicated (at least to me ;-) )

I think the above, generally (too generally!), addresses devoured elysium's (the OP) questions of "what do RNN do instead" and the "general tasks they are used for". To complement this information, here's an incomplete and informal survey of applications of RNNs. The difficulties in gathering such a list are multiple:

- the overlap of applications between Feed-forward Networks and RNNs (as a result this hides the specificity of RNNs)

- the often highly specialized nature of applications (we either stay with overly broad concepts such as "classification" or we dive into "Prediction of Carbon shifts in series of saturated benzenes" ;-) )

- the hype often associated with neural networks when they are described in popularization texts

Anyway, here's the list:

- Modeling, in particular the learning of [often non-linear] dynamic systems

- Classification (now, FF nets are also used for that...)

- Combinatorial optimization

Also, there are lots of applications associated with the temporal dimension of RNNs (another area where FF networks would typically not be found):

- Motion detection

- Load forecasting (as with utilities or services: predicting the load in the short term)

- Signal processing: filtering and control

智能推荐

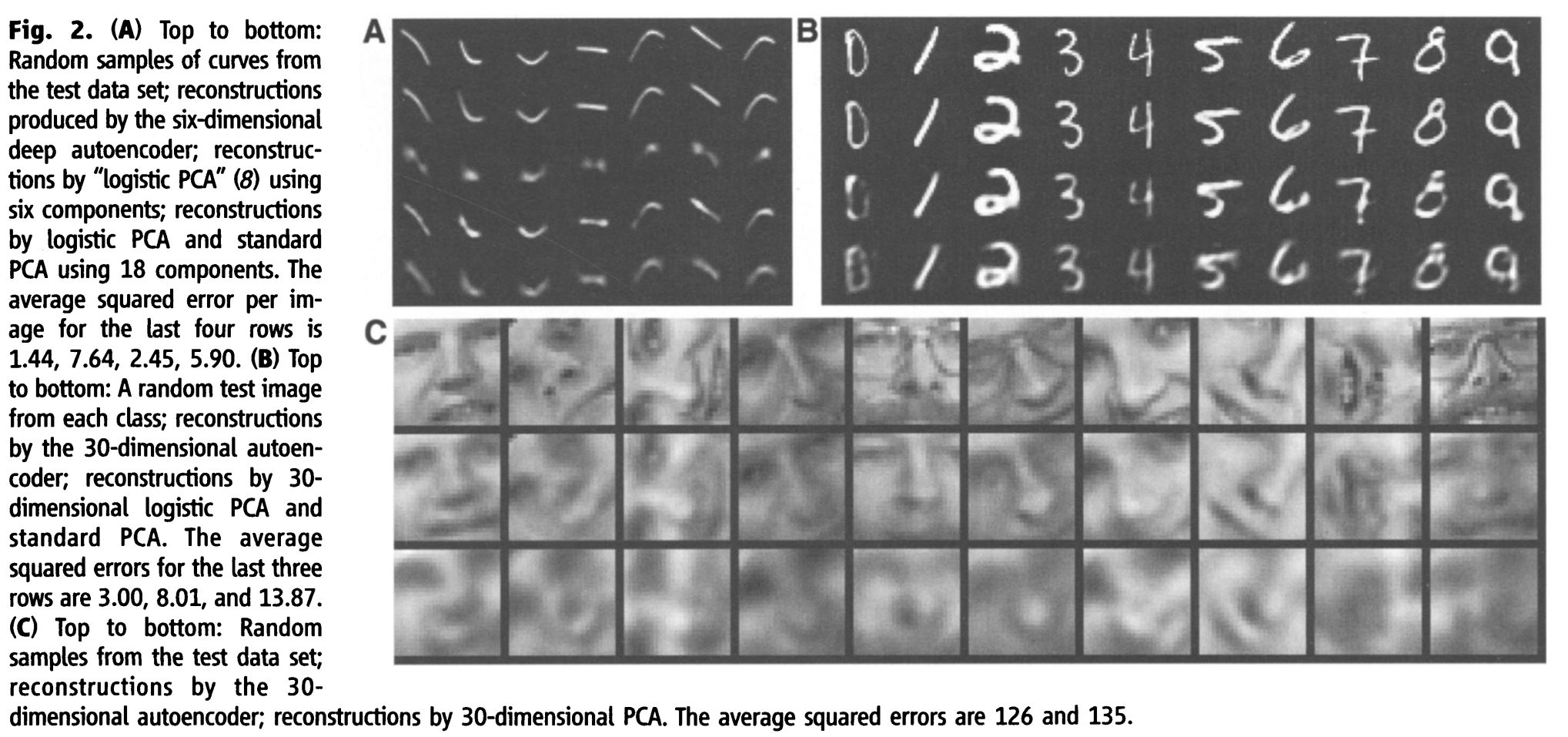

[论文翻译]Reducing the Dimensionality of Data with Neural Networks

论文来源:Reducing the Dimensionality of Data with Neural Networks 通过训练一个具有小中心层的多层神经网络重构高维输入向量,可以将高维数据转换为低维码。梯度下降可以用来微调这种“自动编码器”网络的权值,但只有当初始权值接近一个好的解决方案时,这种方法才能很好地工作。我们描述了一种有效的初始化权值的方法,它允许深度自动编...

[论文翻译]Reducing the Dimensionality of Data with Neural Networks

论文题目:Reducing the Dimensionality of Data with Neural Networks 论文来源:10.1126/science.1127647 翻译人:BDML@CQUT实验室 Reducing the Dimensionality of Data with Neural Networks 利用神经网络降低数据的维度 G. E. Hinton* and R. ...

deepai新课代码The Hello World of Deep Learning with Neural Networks的tf1.0版本

伴随着Tensorflow2.0的发布,前段时间吴恩达在Coursera上发布了配套新课《tensorflow:从入门到精通》,主要由Laurence Moroney讲授,共四周的内容,课程如图所示。 在第一课中,老师实现了一个简单的神经元,并在colab上可以在线运行。代码如下: 你可以直接在colab上运行,是没有问题的,但是由于很多时候都打不开colab,所以我在本地环境下试了一波。结果各种...

论文阅读:A Critical Review of Recurrent Neural Networks for Sequence Learning

论文阅读:A Critical Review of Recurrent Neural Networks for Sequence Learning 2016年04月23日 10:44:41 阅读数:6297 作者: Zachary C. Lipton UCSD 一、论文所解决的问题 现有的关于RNN这一类网络的综述太少了,并且论文之间的符号并不统一,本文就是为了RNN而作的综述 二、论文的内容 (...

<A Critical Review of Recurrent Neural Networks for Sequence Learning>阅读笔记

最近要学习RNN相关知识,根据知乎的建议,首先阅读了这篇经典论文。边读边想遍记录。 一、3个问题 1. 为什么一定要是序列模型? 像SVM、LR、前向反馈网络是建立在 “独立”假设的基础上,更多模型则是人为地去构造前后顺序。但即使这样,上述模型仍然不能解决长时间序列的依赖问题。比如语音或文本识别中的长句子场景。 所以,这种有时间或顺序性的模型,是不能用几个分类器或学习模型串...

猜你喜欢

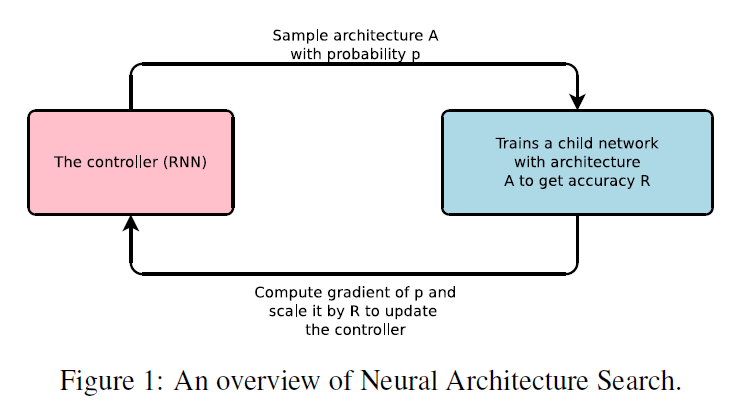

《Neural Architecture Search with Reinforcement Learning》翻译

原文:https://arxiv.org/abs/1611.01578 Neural Architecture Search with Reinforcement Learning ABSTRACT Neural networks are powerful and flexible models that work well for many diffi-cult learning tasks i...

NEURAL ARCHITECTURE SEARCH WITH REINFORCEMENT LEARNING

NEURAL ARCHITECTURE SEARCH WITH REINFORCEMENT LEARNING 本文提出了一种基于梯度的神经结构搜索方法(见图1) 我们的工作是基于这样的观察:神经网络的结构和连接性通常可以由一个可变长度的字符串来指定。因此,可以使用一个递归网络(控制器)来生成这样的字符串。在真实数据上训练由字符串指定的网络——“子网络&rdquo...

自定义类加载器

自定义类加载器 我们如果需要自定义类加载器,只需要继承ClassLoader类,并覆盖掉findClass方法即可。 自定义文件类加载器 自定义网络类加载器 热部署类加载器 当我们调用loadClass方法加载类时,会采用双亲委派模式,即如果类已经被加载,就从缓存中获取,不会重新加载。如果同一个class被同一个类加载器多次加载,则会报错。因此,我们要实现热...

用户界面和兼容性测试

用户界面测试 1 、导航测试 导航直观 Web系统的主要部分可通过主页存取 Web系统不需要站点地图、搜索引擎或其他的导航帮助 Web应用系统的页面结构、导航、菜单、连接的风格一致 2 、图形测试 图形有明确的用途 所有页面字体的风格一致。 背景颜色与字体颜色和前景颜色相搭配。 图片的大小减小到 30k 以下 文字回绕正确 3 、内容测试 Web应用系统提供的信息是正确的 信息无语法或拼写错误 可...

基于ECS部署LAMP环境搭建Drupal网站,云计算技术与应用报告

实验环境: 建站环境:Windows操作系统,基于ECS部署LAMP环境,阿里云资源, Web服务器:Apache,关联的数据库:MySQ PHP:Drupal 8 要求的PHP版本為7.0.33的版本 实验内容和要求:基于ECS部署LAMP环境搭建Drupal网站,drupal是一个好用且功能强大的内容管理系统(CMS),通常也被称为是内容管理框架(CMF),由来自全世界各地的开发人员共同开发和...

问答精选

How we can create Dataproc cluster through rest API or http request?

I am new in python, Here I want to create dataproc cluster using http request. I am following below dataproc documentation where they mentioned in REST API section. see below https://cloud.google.com/...

AddWithValue method on ASP.NET

I am using AddStringWithValue method in ASP.NET using C# My HTML code is My C# code for the method is: The problem is, it is giving red underline under email and password. Shouldn't we identify them w...

How to apply css using a condition?

I'm trying to apply this css: this works well, the problem is that the web app can set a class on the body called white-content, if the white-content class is setted, then I can't see the text of h2, ...

Tile game collision detection with sprite moving to arbitary (x,y)

So I am struggling with some logic for collision detection in my game. I have a grid of tiles(images), all representative of a value in a 2D array, so the location of tile N would be (column m, row n)...

Kotin sort by descending then ascending

Im trying to order a list on multiple parameters.. for example, one value descending, second value ascending, third value descending. is there a way like this to do it? (i know is incorrect) people = ...

相关问题

- What are the uses of the different join operations?

- What are the best workarounds for known problems with Hibernate's schema validation of floating point columns when using Oracle 10g?

- What are the advantages of using an ExecutorService?

- What are the main pain points, when learning Windows Phone 7 programming?

- Learning the Structure of a Hierarchical Reinforcement Task

- What are the merits of using tt, i, b, big, and small tags?

- What are the pitfalls of using .NET RIA Services in Silverlight?

- What are the risks of using SharePoint Designer in a production environment?

- What are the advantages of RTSP?

- What are the advantages of writing a Maven plugin in Groovy compared with Java?

相关文章

- The Unreasonable Effectiveness of Recurrent Neural Networks

- PredRNN: Recurrent Neural Networks for Predictive Learning using Spatiotemporal LSTMs

- A Critical Review of Recurrent Neural Networks for Sequence Learning

- Fundamentals of Deep Learning – Introduction to Recurrent Neural Networks

- On the difficulty of training Recurrent Neural Networks

- AUTOMATIC SPEECH EMOTION RECOGNITION USING RECURRENT NEURAL NETWORKS WITH LOCAL ATTENTION

- 论文笔记12:Building Adaptive Tutoring Model using Artificial Neural Networks and Reinforcement Learning

- Chains of Reasoning over Entities, Relations, and Text using Recurrent Neural Networks

- Exploring the teaching of deep learning in neural networks

- 《Collaborative Filtering with Recurrent Neural Networks》阅读

热门文章

推荐文章

相关标签

推荐问答

- Selecting non-highlighted rows in excel

- How can I append data from a old dataframe onto a new, blank dataframe

- How to turn every 0 into a -1 in a array list in the fastest way?

- Accessing JSON Body with C#

- Spring Security: isAuthenticated using Ajax

- Multiple MS SQL databases as one datasource

- Is it possible to remove from an indexed data structure and avoid shifting at the same time?

- Cognos LIKE function problems

- Regression plot is wrong (python)

- Unable to bind a ResourceDictionary item to Rectangle.Child